Chapter 2. Social Cognition

2.2 How We Use Our Expectations

Learning Objectives

- Provide examples of how salience and accessibility influence information processing.

- Review, differentiate, and give examples of some important cognitive heuristics that influence social judgment.

- Summarize and give examples of the importance of social cognition in everyday life.

Once we have developed a set of schemas and attitudes, we naturally use that information to help us evaluate and respond to others. Our expectations help us to think about, size up, and make sense of individuals, groups of people, and the relationships among people. If we have learned, for example, that someone is friendly and interested in us, we are likely to approach them; if we have learned that they are threatening or unlikable, we will be more likely to withdraw. And if we believe that a person has committed a crime, we may process new information in a manner that helps convince us that our judgment was correct. In this section, we will consider how we use our stored knowledge to come to accurate (and sometimes inaccurate) conclusions about our social worlds.

Automatic versus Controlled Cognition

A good part of both cognition and social cognition is spontaneous or automatic. Automatic cognition refers to thinking that occurs out of our awareness, quickly, and without taking much effort (Ferguson & Bargh, 2003; Ferguson, Hassin, & Bargh, 2008). The things that we do most frequently tend to become more automatic each time we do them, until they reach a level where they don’t really require us to think about them very much. Most of us can ride a bike and operate a television remote control in an automatic way. Even though it took some work to do these things when we were first learning them, it just doesn’t take much effort anymore. And because we spend a lot of time making judgments about others, many of these judgments, which are strongly influenced by our schemas, are made quickly and automatically (Willis & Todorov, 2006).

Because automatic thinking occurs outside of our conscious awareness, we frequently have no idea that it is occurring and influencing our judgments or behaviors. You might remember a time when you returned home, unlocked the door, and 30 seconds later couldn’t remember where you had put your keys! You know that you must have used the keys to get in, and you know you must have put them somewhere, but you simply don’t remember a thing about it. Because many of our everyday judgments and behaviors are performed automatically, we may not always be aware that they are occurring or influencing us.

It is of course a good thing that many things operate automatically because it would be extremely difficult to have to think about them all the time. If you couldn’t drive a car automatically, you wouldn’t be able to talk to the other people riding with you or listen to the radio at the same time—you’d have to be putting most of your attention into driving. On the other hand, relying on our snap judgments about Bianca—that she’s likely to be expressive, for instance—can be erroneous. Sometimes we need to—and should—go beyond automatic cognition and consider people more carefully. When we deliberately size up and think about something, for instance, another person, we call it controlled cognition. Although you might think that controlled cognition would be more common and that automatic thinking would be less likely, that is not always the case. The problem is that thinking takes effort and time, and we often don’t have too much of those things available.

In the following Research Focus, we consider an example of automatic cognition in a study that uses a common social cognitive procedure known as priming, a technique in which information is temporarily brought into memory through exposure to situational events, which can then influence judgments entirely out of awareness.

Research Focus

Behavioral Effects of Priming

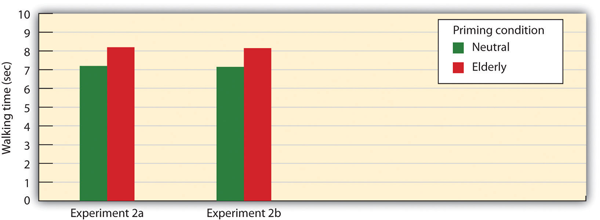

In one demonstration of how automatic cognition can influence our behaviors without us being aware of them, John Bargh and his colleagues (Bargh, Chen, & Burrows, 1996) conducted two studies, each with the exact same procedure. In the experiments, they showed college students sets of five scrambled words. The students were to unscramble the five words in each set to make a sentence. Furthermore, for half of the research participants, the words were related to the stereotype of elderly people. These participants saw words such as “in Florida retired live people” and “bingo man the forgetful plays.”

The other half of the research participants also made sentences but did so out of words that had nothing to do with the elderly stereotype. The purpose of this task was to prime (activate) the schema of elderly people in memory for some of the participants but not for others.

The experimenters then assessed whether the priming of elderly stereotypes would have any effect on the students’ behavior—and indeed it did. When each research participant had gathered all his or her belongings, thinking that the experiment was over, the experimenter thanked him or her for participating and gave directions to the closest elevator. Then, without the participant knowing it, the experimenters recorded the amount of time that the participant spent walking from the doorway of the experimental room toward the elevator. As you can see in Figure 2.8, “Automatic Priming and Behavior,” the same results were found in both experiments—the participants who had made sentences using words related to the elderly stereotype took on the behaviors of the elderly—they walked significantly more slowly (in fact, about 12% more slowly across the two studies) as they left the experimental room.

To determine if these priming effects occurred out of the conscious awareness of the participants, Bargh and his colleagues asked a third group of students to complete the priming task and then to indicate whether they thought the words they had used to make the sentences had any relationship to each other or could possibly have influenced their behavior in any way. These students had no awareness of the possibility that the words might have been related to the elderly or could have influenced their behavior.

The point of these experiments, and many others like them, is clear—it is quite possible that our judgments and behaviors are influenced by our social situations, and this influence may be entirely outside of our conscious awareness. To return again to Bianca, it is even possible that we notice her nationality and that our beliefs about Italians influence our responses to her, even though we have no idea that they are doing so and really believe that they have not.

Salience and Accessibility Determine Which Expectations We Use

We each have a large number of schemas that we might bring to bear on any type of judgment we might make. When thinking about Bianca, for instance, we might focus on her nationality, her gender, her physical attractiveness, her intelligence, or any of many other possible features. And we will react to Bianca differently depending on which schemas we use. Schema activation is determined both by the salience of the characteristics of the person we are judging and by the current activation or cognitive accessibility of the schema.

Salience

One determinant of which schemas are likely to be used in social judgment is the extent to which we attend to particular features of the person or situation that we are responding to. We are more likely to judge people on the basis of salient characteristics, that is, characteristics that attract our attention when we see someone with them. For example, things that are unusual, negative, colorful, bright, and moving are more salient and thus more likely to be attended to than are things that do not have these characteristics (McArthur & Post, 1977; Taylor & Fiske, 1978).

We are more likely to initially judge people on the basis of their sex, race, age, and physical attractiveness, rather than on, say, their religious orientation or their political beliefs, in part because these features are so salient when we see them (Brewer, 1988). Another thing that makes something particularly salient is its infrequency or unusualness. If Bianca is from Italy and very few other people in our community are, that characteristic is something that we notice, it is salient, and we are therefore likely to attend to it. That she is also a woman is, at least in this context, less salient.

The salience of the stimuli in our social worlds may sometimes lead us to make judgments on the basis of information that is actually less informative than is other less salient information. Imagine, for instance, that you wanted to buy a new smartphone for yourself. You’ve been trying to decide whether to get the iPhone or a rival product. You went online and checked out the reviews, and you found that although the phones differed on many dimensions, including price, battery life, and so forth, the rival product was nevertheless rated significantly higher by the owners than was the iPhone. As a result, you decide to go and purchase one the next day. That night, however, you go to a party, and a friend of yours shows you her iPhone. You check it out, and it seems really great. You tell her that you were thinking of buying a rival product, and she tells you that you are crazy. She says she knows someone who had one and had a lot of problems—it didn’t download music properly, the battery died right after the warranty was up, and so forth, and that she would never buy one. Would you still buy it, or would you switch your plans?

If you think about this question logically, the information that you just got from your friend isn’t really all that important; you now know the opinions of one more person, but that can’t really change the overall consumer ratings of the two machines very much. On the other hand, the information your friend gives you and the chance to use her iPhone are highly salient. The information is right there in front of you, in your hand, whereas the statistical information from reviews is only in the form of a table that you saw on your computer. The outcome in cases such as this is that people frequently ignore the less salient, but more important, information, such as the likelihood that events occur across a large population, known as base rates, in favor of the actually less important, but nevertheless more salient, information.
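To see why one more opinion can barely move the overall consumer ratings, here is a minimal sketch in Python. All of the numbers are hypothetical, since the example above gives no actual ratings: it assumes 1,000 owners rated the rival phone at an average of 4.5 out of 5 stars, and it treats the friend's secondhand report as a single additional 1-star rating.

```python
# Hypothetical figures for illustration only; the text gives no actual ratings.
n_reviews = 1000     # assumed number of owner reviews for the rival phone
mean_rating = 4.5    # assumed average rating on a 5-star scale
new_rating = 1.0     # the friend's secondhand report, treated as one 1-star review

# Average after adding the single new opinion to the existing reviews.
updated_mean = (n_reviews * mean_rating + new_rating) / (n_reviews + 1)
shift = mean_rating - updated_mean

print(f"average before: {mean_rating:.3f}")
print(f"average after:  {updated_mean:.3f}")
print(f"shift:          {shift:.4f} stars")
```

Under these assumptions the average drops by well under a hundredth of a star, even though the single vivid report feels far more compelling than the table of ratings.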

Another case in which we ignore base-rate information occurs when we use the representativeness heuristic, which occurs when we base our judgments on information that seems to represent, or match, what we expect will happen, while ignoring more informative base-rate information. Consider, for instance, the following puzzle. Let’s say that you went to a hospital this week, and you checked the records of the babies that were born on that day (Table 2.2, “Using the Representativeness Heuristic”). Which pattern of births do you think that you are most likely to find?

| Time | List A | List B |
|---|---|---|
| 6:31 a.m. | Girl | Boy |
| 8:15 a.m. | Girl | Girl |
| 9:42 a.m. | Girl | Boy |
| 1:13 p.m. | Girl | Girl |
| 3:39 p.m. | Boy | Girl |
| 5:12 p.m. | Boy | Boy |
| 7:42 p.m. | Boy | Girl |
| 11:44 p.m. | Boy | Boy |

Most people think that List B is more likely, probably because it looks more random and thus matches (is “representative of”) our ideas about randomness. But statisticians know that any pattern of four girls and four boys is equally likely and thus that List B is no more likely than List A. The problem is that we have an image of what randomness should be, which doesn’t always match what is rationally the case. Similarly, people who see a coin that comes up heads five times in a row will frequently predict (and perhaps even bet!) that tails will be next—it just seems like it has to be. But mathematically, this erroneous expectation (known as the gambler’s fallacy) is simply not true: the base-rate likelihood of any single coin flip being tails is only 50%, regardless of how many times it has come up heads in the past.
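The two claims in this paragraph can be checked with a short calculation. The sketch below is illustrative and rests on a simplifying assumption: each birth is independently a girl or a boy with probability 1/2, and each coin flip is fair and independent. The variable and function names are our own.

```python
from fractions import Fraction
from itertools import product

# Assumption: each birth is independently a girl ("G") or a boy ("B")
# with probability 1/2, and each coin flip is fair and independent.
HALF = Fraction(1, 2)

list_a = "GGGGBBBB"  # List A from the table
list_b = "BGBGGBGB"  # List B from the table

def sequence_probability(sequence):
    """Probability of seeing exactly this ordered sequence."""
    return HALF ** len(sequence)

p_a = sequence_probability(list_a)
p_b = sequence_probability(list_b)

# Count how many of the 2**8 possible orderings contain four girls and four boys.
orderings = ["".join(s) for s in product("GB", repeat=8)]
four_and_four = [s for s in orderings if s.count("G") == 4]

# Gambler's fallacy: among all 6-flip sequences that start with five heads,
# what fraction end in tails?
flips = ["".join(s) for s in product("HT", repeat=6)]
after_five_heads = [s for s in flips if s.startswith("HHHHH")]
p_tails_next = Fraction(
    sum(s.endswith("T") for s in after_five_heads), len(after_five_heads)
)

print(p_a, p_b, len(four_and_four), p_tails_next)
```

Under these assumptions, List A and List B each have probability 1/256. List B only feels more likely because many of the 70 four-and-four orderings look similarly jumbled, while very few look as orderly as List A. The chance of tails after five heads is still 1/2.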

To take one more example, consider the following information:

I have a friend who is analytical, argumentative, and is involved in community activism. Which of the following is she? (Choose one.)

—A lawyer

—A salesperson

Can you see how you might be led, potentially incorrectly, into thinking that my friend is a lawyer? Why? The description (“analytical, argumentative, and is involved in community activism”) just seems more representative or stereotypical of our expectations about lawyers than salespeople. But the base rates tell us something completely different, which should make us wary of that conclusion. Simply put, the number of salespeople greatly outweighs the number of lawyers in society, and thus statistically it is far more likely that she is a salesperson. Nevertheless, the representativeness heuristic will often cause us to overlook such important information. One unfortunate consequence of this is that it can contribute to the maintenance of stereotypes. If someone you meet seems, superficially at least, to represent the stereotypical characteristics of a social group, you may incorrectly classify that person as a member of that group, even when it is highly likely that he or she is not.
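The base-rate argument in the lawyer example can be made concrete with Bayes' rule. The sketch below uses made-up numbers purely for illustration: it assumes ten salespeople for every lawyer, that 30% of lawyers fit the description, and that 5% of salespeople do. None of these figures come from the text, and the conclusion depends on them.

```python
from fractions import Fraction

# Hypothetical inputs, chosen only to illustrate the reasoning.
prior_lawyer = Fraction(1, 11)      # assumed: 1 lawyer for every 10 salespeople
prior_sales = Fraction(10, 11)
fit_given_lawyer = Fraction(3, 10)  # assumed share of lawyers who fit the description
fit_given_sales = Fraction(1, 20)   # assumed share of salespeople who fit it

def posterior(prior_a, like_a, prior_b, like_b):
    """Bayes' rule for two mutually exclusive, exhaustive options: P(A | evidence)."""
    joint_a = prior_a * like_a
    joint_b = prior_b * like_b
    return joint_a / (joint_a + joint_b)

p_lawyer = posterior(prior_lawyer, fit_given_lawyer, prior_sales, fit_given_sales)
p_sales = 1 - p_lawyer

print(f"P(lawyer | description)      = {float(p_lawyer):.3f}")
print(f"P(salesperson | description) = {float(p_sales):.3f}")
```

With these assumed numbers, the description is six times more typical of lawyers than of salespeople, yet the friend is still more likely to be a salesperson (5/8 versus 3/8) because salespeople are so much more numerous. A larger gap in base rates would tilt the result further.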

Cognitive Accessibility

Although the characteristics that we use to think about objects or people are determined in part by their salience, individual differences in the person who is doing the judging are also important. People vary in the type of schemas that they tend to use when judging others and when thinking about themselves. One way to consider this is in terms of the cognitive accessibility of the schema. Cognitive accessibility refers to the extent to which a schema is activated in memory and thus likely to be used in information processing. Simply put, the schemas we tend to typically use are often those that are most accessible to us.

You probably know people who are football nuts (or maybe tennis or some other sport nuts). All they can talk about is football. For them, we would say that football is a highly accessible construct. Because they love football, it is important to their self-concept; they set many of their goals in terms of the sport, and they tend to think about things and people in terms of it (“If he plays or watches football, he must be okay!”). Other people have highly accessible schemas about eating healthy food, exercising, environmental issues, or really good coffee, for instance. In short, when a schema is accessible, we are likely to use it to make judgments of ourselves and others.

Although accessibility can be considered a person variable (a given idea is more highly accessible for some people than for others), accessibility can also be influenced by situational factors. When we have recently or frequently thought about a given topic, that topic becomes more accessible and is likely to influence our judgments. This is in fact a potential explanation for the results of the priming study you read about earlier—people walked slower because the concept of elderly had been primed and thus was currently highly accessible for them.

Because we rely so heavily on our schemas and attitudes, and particularly on those that are salient and accessible, we can sometimes be overly influenced by them. Imagine, for instance, that I asked you to close your eyes and determine whether there are more words in the English language that begin with the letter R or that have the letter R as the third letter. You would probably try to solve this problem by thinking of words that have each of the characteristics. It turns out that most people think there are more words that begin with R, even though there are in fact more words that have R as the third letter.

You can see that this error can occur as a result of cognitive accessibility. To answer the question, we naturally try to think of all the words that we know that begin with R and that have R in the third position. The problem is that when we do that, it is much easier to retrieve the former than the latter, because we store words by their first, not by their third, letter. We may also think that our friends are nice people because we see them primarily when they are around us (their friends). And the traffic might seem worse in our own neighborhood than we think it is in other places, in part because nearby traffic jams are more accessible for us than are traffic jams that occur somewhere else. And do you think it is more likely that you will be killed in a plane crash or in a car crash? Many people fear the former, even though the latter is much more likely: statistically, your chances of being involved in an aircraft accident are far lower than your chances of being killed in an automobile accident. In this case, the problem is that plane crashes, which are highly salient, are more easily retrieved from our memory than are car crashes, which often receive far less media coverage.

The tendency to make judgments of the frequency of an event, or the likelihood that an event will occur, on the basis of the ease with which the event can be retrieved from memory is known as the availability heuristic (Schwarz & Vaughn, 2002; Tversky & Kahneman, 1973). The idea is that things that are highly accessible (in this case, the term availability is used) come to mind easily and thus may overly influence our judgments. Thus, despite the clear facts, it may be easier to think of plane crashes than of car crashes because the former are more accessible. If so, the availability heuristic can lead to errors in judgments.

For example, as people tend to overestimate the risk of rare but dramatic events, including plane crashes and terrorist attacks, their responses to these estimations may not always be proportionate to the true risks. For instance, it has been widely documented that fewer people chose to use air travel in the aftermath of the September 11, 2001 (9/11), terrorist attacks on the World Trade Center, particularly in the United States. Correspondingly, many individuals chose other methods of travel, often electing to drive rather than fly to their destination. Statistics across all regions of the world confirm that driving is far more dangerous than flying, and this prompted the cognitive psychologist Gerd Gigerenzer to estimate how many extra deaths the increased road traffic following 9/11 might have caused. He arrived at an estimate of around an additional 1,500 road deaths in the United States alone in the year following those terrorist attacks, which was six times the number of people killed on the airplanes on September 11, 2001 (Gigerenzer, 2006).

Another way that the cognitive accessibility of constructs can influence information processing is through their effects on processing fluency. Processing fluency refers to the ease with which we can process information in our environments. When stimuli are highly accessible, they can be quickly attended to and processed, and they therefore have a large influence on our perceptions. This influence is due, in part, to the fact that we often react positively to information that we can process quickly, and we use this positive response as a basis of judgment (Reber, Winkielman, & Schwarz, 1998; Winkielman & Cacioppo, 2001).

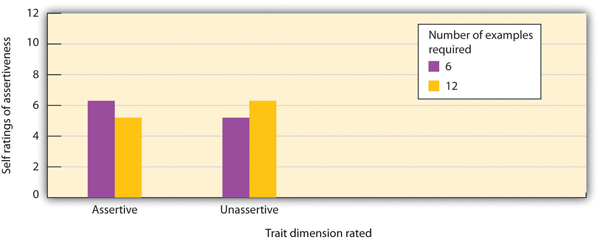

In one study demonstrating this effect, Norbert Schwarz and his colleagues (Schwarz et al., 1991) asked one set of college students to list six occasions when they had acted either assertively or unassertively, and asked another set of college students to list 12 such examples. Schwarz determined that for most students, it was pretty easy to list six examples but pretty hard to list 12.

The researchers then asked the participants to indicate how assertive or unassertive they actually were. You can see from Figure 2.10, “Processing Fluency,” that the ease of processing influenced judgments. The participants who had an easy time listing examples of their behavior (because they only had to list six instances) judged that they did in fact have the characteristics they were asked about (either assertive or unassertive), in comparison with the participants who had a harder time doing the task (because they had to list 12 instances). Other research has found similar effects—people rate that they ride their bicycles more often after they have been asked to recall only a few rather than many instances of doing so (Aarts & Dijksterhuis, 1999), and they hold an attitude with more confidence after being asked to generate few rather than many arguments that support it (Haddock, Rothman, Reber, & Schwarz, 1999). Sometimes less really is more!

Echoing the findings mentioned earlier in relation to schemas, we are likely to use this type of quick and “intuitive” processing, based on our feelings about how easy it is to complete a task, when we don’t have much time or energy for more in-depth processing, such as when we are under time pressure, tired, or unwilling to process the stimulus in sufficient detail. Of course, it is very adaptive to respond to stimuli quickly (Sloman, 2002; Stanovich & West, 2002; Winkielman, Schwarz, & Nowak, 2002), and it is not impossible that in at least some cases, we are better off making decisions based on our initial responses than on a more thoughtful cognitive analysis (Loewenstein, Weber, Hsee, & Welch, 2001). For instance, Dijksterhuis, Bos, Nordgren, and van Baaren (2006) found that when participants were given tasks requiring decisions that were very difficult to make on the basis of a cognitive analysis of the problem, they made better decisions when they didn’t try to analyze the details carefully but simply relied on their intuitions.

In sum, people are influenced not only by the information they get but also by how they get it. We are more highly influenced by things that are salient and accessible and thus easily attended to, remembered, and processed. On the other hand, information that is harder to access from memory, is less likely to be attended to, or takes more effort to consider is less likely to be used in our judgments, even if this information is statistically more informative.

The False Consensus Bias Makes Us Think That Others Are More Like Us Than They Really Are

The tendency to base our judgments on the accessibility of social constructs can lead to still other errors in judgment. One such error is known as the false consensus bias, the tendency to overestimate the extent to which other people hold similar views to our own. As our own beliefs are highly accessible to us, we tend to rely on them too heavily when asked to predict those of others. For instance, if you are in favor of abortion rights and opposed to capital punishment, then you are likely to think that most other people share these beliefs (Ross, Greene, & House, 1977). In one demonstration of the false consensus bias, Joachim Krueger and his colleagues (Krueger & Clement, 1994) gave their research participants, who were college students, a personality test. Then they asked the same participants to estimate the percentage of other students in their school who would have answered the questions the same way that they did. The students who agreed with the items often thought that others would agree with them too, whereas the students who disagreed typically believed that others would also disagree. A closely related bias to the false consensus effect is the projection bias, which is the tendency to assume that others share our cognitive and affective states (Hsee, Hastie, & Chen, 2008).

With regard to our chapter case study, the false consensus effect has also been implicated in the potential causes of the 2008 financial collapse. Considering investor behavior within its social context, an important part of sound decision making is the ability to predict other investors’ intentions and behaviors, as this will help to foresee potential market trends. In this context, Egan, Merkle, and Weber (in press) outline how the false consensus effect can lead investors to overestimate the extent to which other investors share their judgments about the likely trends, which can in turn lead them to make inaccurate predictions of those investors’ behavior, with dire economic consequences.

Although it is commonly observed, the false consensus bias does not occur on all dimensions. Specifically, the false consensus bias is not usually observed on judgments of positive personal traits that we highly value as important. People (falsely, of course) report that they have better personalities (e.g., a better sense of humor), that they engage in better behaviors (e.g., they are more likely to wear seatbelts), and that they have brighter futures than almost everyone else (Chambers, 2008). These results suggest that although in most cases we assume that we are similar to others, in cases of valued personal characteristics the goals of self-concern lead us to see ourselves more positively than we see the average person. There are some important cultural differences here, though, with members of collectivist cultures typically showing less of this type of self-enhancing bias than those from individualistic cultures (Heine, Lehman, Markus, & Kitayama, 1999).

Perceptions of What “Might Have Been” Lead to Counterfactual Thinking

In addition to influencing our judgments about ourselves and others, the salience and accessibility of information can have an important effect on our own emotions and self-esteem. Our emotional reactions to events are often colored not only by what did happen but also by what might have happened. If we can easily imagine an outcome that is better than what actually happened, then we may experience sadness and disappointment; on the other hand, if we can easily imagine that a result might have been worse than what actually happened, we may be more likely to experience happiness and satisfaction. The tendency to think about events according to what might have been is known as counterfactual thinking (Roese, 1997).

Imagine, for instance, that you were participating in an important contest, and you won the silver medal. How would you feel? Certainly you would be happy that you won, but wouldn’t you probably also be thinking a lot about what might have happened if you had been just a little bit better—you might have won the gold medal! On the other hand, how might you feel if you won the bronze medal (third place)? If you were thinking about the counterfactual (the “what might have been”), perhaps the idea of not getting any medal at all would have been highly accessible and so you’d be happy that you got the medal you did get.

Medvec, Madey, and Gilovich (1995) investigated exactly this idea by videotaping the responses of athletes who won medals in the 1992 summer Olympic Games. They videotaped the athletes both as they learned that they had won a silver or a bronze medal and again as they were awarded the medal. Then they showed these videos, without any sound, to people who did not know which medal which athlete had won. The raters indicated how they thought the athlete was feeling, on a range from “agony” to “ecstasy.” The results showed that the bronze medalists did indeed seem to be, on average, happier than were the silver medalists. Then, in a follow-up study, raters watched interviews with many of these same athletes as they talked about their performance. The raters indicated what we would expect on the basis of counterfactual thinking. The silver medalists often talked about their disappointments in having finished second rather than first, whereas the bronze medalists tended to focus on how happy they were to have finished third rather than fourth.

Counterfactual thinking seems to be part of the human condition and has even been studied in numerous other social settings, including juries. For example, people who were asked to award monetary damages to others who had been in an accident offered them substantially more in compensation if they were almost not injured than they did if the accident seemed more inevitable (Miller, Turnbull, & McFarland, 1988).

Again, the moral of the story regarding the importance of cognitive accessibility is clear—in the case of counterfactual thinking, the accessibility of the potential alternative outcome can lead to some seemingly paradoxical effects.

Anchoring and Adjustment Lead Us to Accept Ideas That We Should Revise

In some cases, we may be aware of the danger of acting on our expectations and attempt to adjust for them. Perhaps you have been in a situation where you are beginning a course with a new professor and you know that a good friend of yours does not like him. You may be thinking that you want to go beyond your negative expectation and prevent this knowledge from biasing your judgment. However, the accessibility of the initial information frequently prevents this adjustment from occurring—leading us to weight initial information too heavily and thereby insufficiently move our judgment away from it. This is called the problem of anchoring and adjustment.

Tversky and Kahneman (1974) asked some of the student participants in one of their studies of anchoring and adjustment to solve this multiplication problem quickly and without using a calculator:

1 × 2 × 3 × 4 × 5 × 6 × 7 × 8

They asked other participants to solve this problem:

8 × 7 × 6 × 5 × 4 × 3 × 2 × 1

They found that students who saw the first problem gave an estimated answer of about 512, whereas the students who saw the second problem estimated about 2,250. Tversky and Kahneman argued that the students couldn’t solve the whole problem in their head, so they did the first few multiplications and then used the outcome of this preliminary calculation as their starting point, or anchor. Then the participants used their starting estimate to find an answer that sounded plausible. In both cases, the estimates were too low relative to the true value of the product (which is 40,320)—but the first set of guesses were even lower because they started from a lower anchor.
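The anchoring account above can be illustrated by computing the running products for each ordering. This is a minimal sketch in Python with names of our own choosing. The source says only that students did "the first few multiplications," so the choice to inspect the product after three factors is an assumption made for illustration.

```python
from itertools import accumulate
from operator import mul

ascending = list(range(1, 9))   # 1 x 2 x 3 x ... x 8
descending = ascending[::-1]    # 8 x 7 x 6 x ... x 1

def running_products(factors):
    """Return the product after each successive factor is multiplied in."""
    return list(accumulate(factors, mul))

asc_steps = running_products(ascending)
desc_steps = running_products(descending)

# Assumption: a hurried solver stops after roughly three factors and
# uses that partial result as the anchor.
anchor_after = 3
print("ascending anchor: ", asc_steps[anchor_after - 1])   # 1 x 2 x 3
print("descending anchor:", desc_steps[anchor_after - 1])  # 8 x 7 x 6
print("true product:     ", asc_steps[-1])
```

After three factors the ascending problem has reached only 6 while the descending problem has already reached 336, although both end at 40,320. Adjusting upward from such different starting points is consistent with the lower estimates for the ascending version.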

Interestingly, the tendency to anchor on initial information seems to be sufficiently strong that in some cases, people will do so even when the anchor is clearly irrelevant to the task at hand. For example, Ariely, Loewenstein, and Prelec (2003) asked students to bid on items in an auction after having noted the last two digits of their social security numbers. They then asked the students to generate and write down a hypothetical price for each of the auction items, based on these numbers. If the last two digits were 11, then the bottle of wine, for example, was priced at $11. If the two digits were 88, the textbook was $88. After they wrote down this initial, arbitrary price, they then had to bid for the item. People with high numbers bid up to 346% more than those with low ones! Ariely, reflecting further on these findings, concluded that “Social security numbers were the anchor in this experiment only because we requested them. We could have just as well asked for the current temperature or the manufacturer’s suggested retail price. Any question, in fact, would have created the anchor. Does that seem rational? Of course not” (2008, p. 26). A rather startling conclusion from the effect of arbitrary, irrelevant anchors on our judgments is that we will often grab hold of any available information to guide our judgments, regardless of whether it is actually germane to the issue.

Of course, savvy marketers have long used the anchoring phenomenon to help them. You might not be surprised to hear that people are more likely to buy more products when they are listed as four for $1.00 than when they are listed as $0.25 each (leading people to anchor on the four and perhaps adjust only a bit away). And it is no accident that a car salesperson always starts negotiating with a high price and then works down. The salesperson is trying to get the consumer anchored on the high price, with the hope that it will have a big influence on the final sale value.

Overconfidence

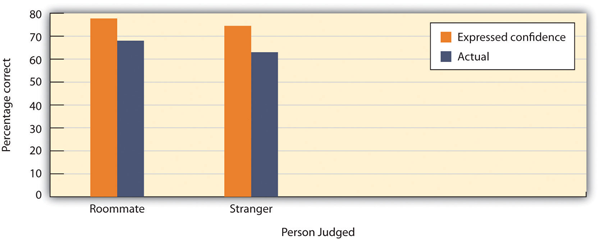

Still another potential judgmental bias, and one that has powerful and often negative effects on our judgments, is the overconfidence bias, a tendency to be overconfident in our own skills, abilities, and judgments. We often have little awareness of our own limitations, leading us to act as if we are more certain about things than we should be, particularly on tasks that are difficult. Adams and Adams (1960) found that for words that were difficult to spell, people were correct in spelling them only about 80% of the time, even though they indicated that they were “100% certain” that they were correct. David Dunning and his colleagues (Dunning, Griffin, Milojkovic, & Ross, 1990) asked college students to predict how another student would react in various situations. Some participants made predictions about a fellow student whom they had just met and interviewed, and others made predictions about their roommates. In both cases, participants reported their confidence in each prediction, and accuracy was determined by the responses of the target persons themselves. The results were clear: regardless of whether they judged a stranger or a roommate, the students consistently overestimated the accuracy of their own predictions (Figure 2.12).

Making matters even worse, Kruger and Dunning (1999) found that people who scored low rather than high on tests of spelling, logic, grammar, and humor appreciation were also most likely to show overconfidence by overestimating how well they would do. Apparently, poor performers are doubly cursed—they not only are unable to predict their own skills but also are the most unaware that they can’t do so (Dunning, Johnson, Ehrlinger, & Kruger, 2003).

The tendency to be overconfident in our judgments can have some very negative effects. When eyewitnesses testify in courtrooms regarding their memories of a crime, they often are completely sure that they are identifying the right person. But their confidence doesn’t correlate much with their actual accuracy. This is, in part, why so many people have been wrongfully convicted on the basis of inaccurate eyewitness testimony given by overconfident witnesses (Wells & Olson, 2003). Overconfidence can also spill over into professional judgments, for example, in clinical psychology (Oskamp, 1965) and in market investment and trading (Chen, Kim, Nofsinger, & Rui, 2007). Indeed, with regard to our case study at the start of this chapter, the role of overconfidence bias in the financial crisis of 2008 and its aftermath has been well documented (Abbes, 2012).

This overconfidence also often seems to apply to social judgments about the future in general. A pervasive optimistic bias has been noted in members of many cultures (Sharot, 2011), which can be defined as a tendency to believe that positive outcomes are more likely to happen than negative ones, particularly in relation to ourselves versus others. Importantly, this optimism is often unwarranted. Most people, for example, underestimate their risk of experiencing negative events like divorce and illness, and overestimate the likelihood of positive ones, including gaining a promotion at work or living to a ripe old age (Schwarzer, 1994). There is some evidence, however, that optimism varies across different groups. People in collectivist cultures tend not to show this bias to the same extent as those living in individualistic ones (Chang, Asakawa, & Sanna, 2001). Moreover, individuals who have clinical depression have been shown to evidence a phenomenon termed depressive realism, whereby their social judgments about the future are less positively skewed and often more accurate than those of people who do not have depression (Moore & Fresco, 2012).

The optimistic bias can also extend into the planning fallacy, defined as a tendency to overestimate the amount that we can accomplish over a particular time frame. This fallacy can also entail the underestimation of the resources and costs involved in completing a task or project, as anyone who has attempted to budget for home renovations can probably attest. Everyday examples of the planning fallacy abound, in everything from the completion of course assignments to the construction of new buildings. On a grander scale, news coverage in any country hosting a major sporting event (for example, the Olympics or the soccer World Cup) always seems to include spiralling budgets and overrunning timelines as the event approaches.

Why is the planning fallacy so persistent? Several factors appear to be at work here. Buehler, Griffin and Peetz (2010) argue that when planning projects, individuals orient to the future and pay too little attention to their past relevant experiences. This can cause them to overlook previous occasions where they experienced difficulties and over-runs. They also tend to plan for what time and resources are likely to be needed, if things run as planned. That is, they do not spend enough time thinking about all the things that might go wrong, for example, all the unforeseen demands on their time and resources that may occur during the completion of the task. Worryingly, the planning fallacy seems to be even stronger for tasks where we are highly motivated and invested in timely completions. It appears that wishful thinking is often at work here (Buehler et al., 2010). For some further perspectives on the advantages and disadvantages of the optimism bias, see this engaging TED Talk by Tali Sharot.

If these biases related to overconfidence appear at least sometimes to lead us to inaccurate social judgments, a key question here is why are they so pervasive? What functions do they serve? One possibility is that they help to enhance people’s motivation and self-esteem levels. If we have a positive view of our abilities and judgments, and are confident that we can execute tasks to deadlines, we will be more likely to attempt challenging projects and to put ourselves forward for demanding opportunities. Moreover, there is consistent evidence that a mild degree of optimism can predict a range of positive outcomes, including success and even physical health (Forgeard & Seligman, 2012).

The Importance of Cognitive Biases in Everyday Life

In our review of some of the many cognitive biases that affect our social judgment, we have seen that the effects on us as individuals range from fairly trivial decisions (for example, which phone to buy, which perhaps doesn’t seem so trivial at the time) to potentially life-and-death decisions (about methods of travel, for instance).

However, when we consider that many of these errors will affect not only us but also everyone around us, then their consequences can really add up. Why would so many people continue to buy lottery tickets or to gamble their money in casinos when the likelihood of them ever winning is so low? One possibility, of course, is the representativeness heuristic: people ignore the low base rates of winning and focus their attention on the salient likelihood of winning a huge prize. And the belief in astrology, which all scientific evidence suggests is not accurate, is probably driven in part by the salience of the occasions when the predictions do come true. When a horoscope is correct (which it will of course sometimes be), the correct prediction is highly salient and may allow people to maintain the (overall false) belief as they recollect confirming evidence more readily.

People may also take more care to prepare for unlikely events than for more likely ones because the unlikely ones are more salient or accessible. For instance, people may think that they are more likely to die from a terrorist attack or as the result of a homicide than they are from diabetes, stroke, or tuberculosis. But the odds are much greater of dying from the health problems than from terrorism or homicide. Because people don’t accurately calibrate their behaviors to match the true potential risks, the individual and societal costs are quite large (Slovic, 2000).

As well as influencing our judgments relating to ourselves, salience and accessibility also color how we perceive our social worlds, which may have a big influence on our behavior. For instance, people who watch a lot of violent television shows also tend to view the world as more dangerous in comparison to those who watch less violent TV (Doob & Macdonald, 1979). This follows from the idea that our judgments are based on the accessibility of relevant constructs. We also overestimate our contribution to joint projects (Ross & Sicoly, 1979), perhaps in part because our own contributions are so obvious and salient, whereas the contributions of others are much less so. And the use of cognitive heuristics can even affect our views about global warming. Joireman, Barnes, Truelove, and Duell (2010) found that people were more likely to believe in the existence of global warming when they were asked about it on hotter rather than colder days and when they had first been primed with words relating to heat. Thus the principles of salience and accessibility, because they are such an important part of our social judgments, can create a series of biases that can make a difference on a truly global level.

As we have already seen specifically in relation to overconfidence, research has found that even people who should know better—and who need to know better—are subject to cognitive biases in general. Economists, stock traders, managers, lawyers, and even doctors have been found to make the same kinds of mistakes in their professional activities that people make in their everyday lives (Byrne & McEleney, 2000; Gilovich, Griffin, & Kahneman, 2002; Hilton, 2001). And the use of cognitive heuristics is increased when people are under time pressure (Kruglanski & Freund, 1983) or when they feel threatened (Kassam, Koslov, & Mendes, 2009), exactly the situations that often occur when professionals are required to make their decisions.

Biased About Our Biases: The Bias Blind Spot

So far, we have discussed some of the most important and heavily researched social cognitive biases that affect our appraisals of ourselves in relation to our social worlds and noted some of their key limitations. Recently, some social psychologists have become interested in how aware we are of these biases and of the ways in which they can affect our own and others’ thinking. The short answer is that we often underestimate the extent to which our social cognition is biased, and that we typically (incorrectly) believe that we are less biased than the average person. Researchers have named this tendency to believe that our own judgments are less susceptible to the influence of bias than those of others the bias blind spot (Ehrlinger, Gilovich, & Ross, 2005). Interestingly, the level of bias blind spot that people demonstrate is unrelated to the actual amount of bias they show in their social judgments (West, Meserve, & Stanovich, 2012). Moreover, those scoring higher in cognitive ability actually tend to show a larger bias blind spot (West et al., 2012).

So, if our social cognition appears to be riddled with multiple biases, and we tend to show biases about these biases, what hope is there for us in reaching sound social judgments? Before we arrive at such a pessimistic conclusion, however, it is important to redress the balance of evidence a little. Perhaps just learning more about these biases, as we have done in this chapter, can help us to recognize when they are likely to be useful to our social judgments, and to take steps to reduce their effects when they hinder our understanding of our social worlds. Although many of the biases discussed tend to persist even in the face of our awareness, learning about them could at the very least be an important first step toward reducing their unhelpful effects on our social cognition. In order to get reliably better at policing our biases, though, we probably need to go further. One of the world’s foremost authorities on social cognitive biases, Nobel Laureate Daniel Kahneman, certainly thinks so. He argues that individual awareness of biases is an important precursor to the development of a common vocabulary about them, which will then make us better able as communities to discuss their effects on our social judgments (Kahneman, 2011). Kahneman also asserts that we may be more likely to recognize and challenge bias in each other’s thinking than in our own, an observation that certainly fits with the concept of the bias blind spot. Perhaps, even if we cannot effectively police our thinking on our own, we can help to police one another’s.

These arguments are consistent with some evidence that, although mere awareness is rarely enough to significantly attenuate the effects of bias, it can be helpful when accompanied by systematic cognitive retraining. Many social psychologists and other scientists are working to help people make better decisions. One possibility is to provide people with better feedback. Weather forecasters, for instance, are quite accurate in their decisions (at least in the short-term), in part because they are able to learn from the clear feedback that they get about the accuracy of their predictions. Other research has found that accessibility biases can be reduced by leading people to consider multiple alternatives rather than focusing only on the most obvious ones, and by encouraging people to think about exactly the opposite possible outcomes than the ones they are expecting (Hirt, Kardes, & Markman, 2004). And certain educational experiences can help people to make better decisions. For instance, Lehman, Lempert, and Nisbett (1988) found that graduate students in medicine, law, and chemistry, and particularly those in psychology, all showed significant improvement in their ability to reason correctly over the course of their graduate training.

Another source of some optimism about the accuracy of our social cognition is that these heuristics and biases can, despite their limitations, often lead us to a broadly accurate understanding of the situations we encounter. Although we do have limited cognitive abilities, information, and time when making social judgments, that does not mean we cannot and do not make enough sense of our social worlds to function effectively in our daily lives. Indeed, some researchers, including Cosmides and Tooby (2000) and Gigerenzer (2004), have argued that these biases and heuristics have been sculpted by evolutionary forces to offer fast and frugal ways of reaching sound judgments about our infinitely complex social worlds enough of the time to have adaptive value. If, for example, you were asked to say which Spanish city had a larger population, Madrid or Valencia, the chances are you would quickly answer that Madrid was bigger, even if you did not know the relevant population figures. Why? Perhaps the availability heuristic and cognitive accessibility had something to do with it: the chances are that most people have simply heard more about Madrid in the global media over the years, and they can more readily bring these instances to mind. From there, it is a short leap to the general rule that larger cities tend to get more media coverage. So, although our journeys to our social judgments may not always be pretty, at least we often arrive at the right destination.

H5P: TEST YOUR LEARNING: CHAPTER 2 DRAG THE WORDS – BIASES AND HEURISTICS

To check your learning of some key concepts so far, match each of the scenarios below to one of the following social cognitive biases or heuristics that we have covered in this chapter. Match the concept that you think is most clearly shown in each scenario into the correct box.

Concepts: overconfidence bias, availability heuristic, bias blind spot, false consensus bias, confirmation bias

Scenarios:

- After the 9/11 terrorist attacks in the U.S., it has been estimated that more people were killed in road accidents when driving instead of flying to their destinations than were killed in those terrorist attacks themselves. Which bias or heuristic is most relevant to people’s overestimation of the risk of another airborne terrorist attack?

- The ship RMS Titanic was fitted with fewer than half the lifeboat capacity needed to save her passengers and crew. Part of the reason for this was that she had been designed to be practically unsinkable, and no-one could envisage an event which would cause her to founder. On April 14th, 1912, Titanic collided with an iceberg on her maiden voyage, and sank with the loss of more than 1500 lives. Which bias or heuristic is most relevant to this underestimation of the dangers?

- In a recent news item, a federal judge defended his ability to impartially preside over a case involving a close friend. Many others could see that his impartiality had been compromised. Which bias or heuristic is most relevant to the judge’s inability to see this?

- During the COVID-19 pandemic, many people have used search engines to find support for their beliefs around things like the effectiveness of mask wearing and social distancing. Which bias or heuristic is most relevant to these types of information searches?

- People who talk about political candidates they like are sometimes surprised when the others around them do not share their views, which can lead to some heated arguments! Which bias or heuristic is most relevant to people being surprised here?

Social Psychology in the Public Interest

The Validity of Eyewitness Testimony

One social situation in which the accuracy of our person-perception skills is vitally important is the area of eyewitness testimony (Charman & Wells, 2007; Toglia, Read, Ross, & Lindsay, 2007; Wells, Memon, & Penrod, 2006). Every year, thousands of individuals are charged with and often convicted of crimes based largely on eyewitness evidence. In fact, many people who were convicted prior to the existence of forensic DNA have now been exonerated by DNA tests, and more than 75% of these people were victims of mistaken eyewitness identification (Wells, Memon, & Penrod, 2006; Fisher, 2011).

The judgments of eyewitnesses are often incorrect, and there is only a small correlation between how accurate and how confident an eyewitness is. Witnesses are frequently overconfident, and a person who claims to be absolutely certain about his or her identification is not much more likely to be accurate than someone who appears much less sure, making it almost impossible to determine whether a particular witness is accurate or not (Wells & Olson, 2003).

To accurately remember a person or an event at a later time, we must be able to accurately see and store the information in the first place, keep it in memory over time, and then accurately retrieve it later. But the social situation can influence any of these processes, causing errors and biases.

In terms of initial encoding of the memory, crimes normally occur quickly, often in situations that are accompanied by a lot of stress, distraction, and arousal. Typically, the eyewitness gets only a brief glimpse of the person committing the crime, and this may be under poor lighting conditions and from far away. And the eyewitness may not always focus on the most important aspects of the scene. Weapons are highly salient, and if a weapon is present during the crime, the eyewitness may focus on the weapon, which would draw his or her attention away from the individual committing the crime (Steblay, 1997). In one relevant study, Loftus, Loftus, and Messo (1987) showed people slides of a customer walking up to a bank teller and pulling out either a pistol or a checkbook. By tracking eye movements, the researchers determined that people were more likely to look at the gun than at the checkbook and that this reduced their ability to accurately identify the criminal in a lineup that was given later.

People may be particularly inaccurate when they are asked to identify members of a race other than their own (Brigham, Bennett, Meissner, & Mitchell, 2007). In one field study, for example, Meissner and Brigham (2001) sent European-American, African-American, and Hispanic students into convenience stores in El Paso, Texas. Each of the students made a purchase, and the researchers came in later to ask the clerks to identify photos of the shoppers. Results showed that the clerks demonstrated the own-race bias: they were all more accurate at identifying customers belonging to their own racial or ethnic group, which may be more salient to them, than they were at identifying people from other groups. There seems to be some truth to the adage that “They all look alike”—at least if an individual is looking at someone who is not of his or her own race.

Even if information gets encoded properly, memories may become distorted over time. For one thing, people might discuss what they saw with other people, or they might read information relating to it from other bystanders or in the media. Such postevent information can distort the original memories such that the witnesses are no longer sure what the real information is and what was provided later. The problem is that the new, inaccurate information is highly cognitively accessible, whereas the older information is much less so. The reconstructive memory bias suggests that the memory may shift over time to fit the individual’s current beliefs about the crime. Even describing a face makes it more difficult to recognize the face later (Dodson, Johnson, & Schooler, 1997).

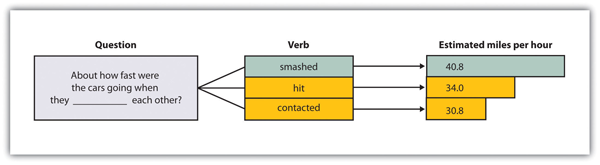

In an experiment by Loftus and Palmer (1974), participants viewed a film of a traffic accident and then, according to random assignment to experimental conditions, answered one of three questions:

- About how fast were the cars going when they hit each other?

- About how fast were the cars going when they smashed each other?

- About how fast were the cars going when they contacted each other?

As you can see in Figure 2.14, “Reconstructive Memory,” although all the participants saw the same accident, their estimates of the speed of the cars varied by condition. People who had seen the “smashed” question estimated the highest average speed, and those who had seen the “contacted” question estimated the lowest.

Participants viewed a film of a traffic accident and then answered a question about the accident. According to random assignment, the blank was filled by either “hit,” “smashed,” or “contacted” each other. The wording of the question influenced the participants’ memory of the accident. Data are from Loftus and Palmer (1974).

The situation is particularly problematic when the eyewitnesses are children, because research has found that children are more likely to make incorrect identifications than are adults (Pozzulo & Lindsay, 1998) and are also subject to the own-race identification bias (Pezdek, Blandon-Gitlin, & Moore, 2003). In many cases, when sex abuse charges have been filed against babysitters, teachers, religious officials, and family members, the children are the only source of evidence. The possibility that children are not accurately remembering the events that have occurred to them creates substantial problems for the legal system.

Another setting in which eyewitnesses may be inaccurate is when they try to identify suspects from mug shots or lineups. A lineup generally includes the suspect and five to seven other innocent people (the fillers), and the eyewitness must pick out the true perpetrator. The problem is that eyewitnesses typically feel pressured to pick a suspect out of the lineup, which increases the likelihood that they will mistakenly pick someone (rather than no one) as the suspect.

Research has attempted to better understand how people remember and potentially misremember the scenes of and people involved in crimes and to attempt to improve how the legal system makes use of eyewitness testimony. In many states, efforts are being made to better inform judges, juries, and lawyers about how inaccurate eyewitness testimony can be. Guidelines have also been proposed to help ensure that child witnesses are questioned in a nonbiasing way (Poole & Lamb, 1998). Steps can also be taken to ensure that lineups yield more accurate eyewitness identifications. Lineups are more fair when the fillers resemble the suspect, when the interviewer makes it clear that the suspect might or might not be present (Steblay, Dysart, Fulero, & Lindsay, 2001), and when the eyewitness has not been shown the same pictures in a mug-shot book prior to the lineup decision. And several recent studies have found that witnesses who make accurate identifications from a lineup reach their decision faster than do witnesses who make mistaken identifications, suggesting that authorities must take into consideration not only the response but how fast it is given (Dunning & Perretta, 2002).

In addition to distorting our memories for events that have actually occurred, misinformation may lead us to falsely remember information that never occurred. Loftus and her colleagues asked parents to provide them with descriptions of events that did happen (e.g., moving to a new house) and did not happen (e.g., being lost in a shopping mall) to their children. Then (without telling the children which events were real or made up) the researchers asked the children to imagine both types of events. The children were instructed to “think really hard” about whether the events had occurred (Ceci, Huffman, Smith, & Loftus, 1994). More than half of the children generated stories regarding at least one of the made-up events, and they remained insistent that the events did in fact occur even when told by the researcher that they could not possibly have occurred (Loftus & Pickrell, 1995). Even college students are susceptible to manipulations that make events that did not actually occur seem as if they did (Mazzoni, Loftus, & Kirsch, 2001).

The ease with which memories can be created or implanted is particularly problematic when the events to be recalled have important consequences. Therapists often argue that patients may repress memories of traumatic events they experienced as children, such as childhood sexual abuse, and then recover the events years later as the therapist leads them to recall the information—for instance, by using dream interpretation and hypnosis (Brown, Scheflin, & Hammond, 1998).

But other researchers argue that painful memories such as sexual abuse are usually very well remembered, that few memories are actually repressed, and that even if they are, it is virtually impossible for patients to accurately retrieve them years later (McNally, Bryant, & Ehlers, 2003; Pope, Poliakoff, Parker, Boynes, & Hudson, 2007). These researchers have argued that the procedures used by the therapists to “retrieve” the memories are more likely to actually implant false memories, leading the patients to erroneously recall events that did not actually occur. Because hundreds of people have been accused, and even imprisoned, on the basis of claims about “recovered memory” of child sexual abuse, the accuracy of these memories has important societal implications. Many psychologists now believe that most of these claims of recovered memories are due to implanted, rather than real, memories (Loftus & Ketcham, 1994).

Taken together, then, the problems of eyewitness testimony represent another example of how social cognition—including the processes that we use to size up and remember other people—may be influenced, sometimes in a way that creates inaccurate perceptions, by the operation of salience, cognitive accessibility, and other information-processing biases.

H5P: TEST YOUR LEARNING: CHAPTER 2 FILL IN THE BLANKS – COVID-19 AND FAMILY DYNAMICS CASE STUDY

Use your understanding of some key social cognitive biases and heuristics to identify which one is most relevant to each part of the case study below. Please choose from the following alternatives, using each one only once, by typing it in the correct box: overconfidence bias, optimistic bias, counterfactual thinking, confirmation bias, false consensus bias, reconstructive memory bias, planning fallacy, anchoring and adjustment, availability heuristic.

- Cole and Kristin have been married ten years and have two school-age children. Like so many families around the world during the COVID-19 pandemic, they have had many new stressors to cope with. One has been their different beliefs about COVID-19. In the early months of the pandemic, Cole thought that it didn’t seem to be much more serious than the flu and was skeptical about things like needing to wear a mask. Kristin, on the other hand, was more frightened of getting the virus and wore a mask indoors long before it was mandatory. The search histories on their laptops are very different around COVID issues, including mask wearing. Cole’s had search terms like: “why wearing masks doesn’t work”, whereas Kristin’s had things like: “why wearing a mask could save your life”. What concept is especially relevant to their different search histories here? Choose one from the following alternatives: overconfidence bias, optimistic bias, counterfactual thinking, confirmation bias, false consensus bias, reconstructive memory bias, planning fallacy, anchoring and adjustment, availability heuristic.

- There have been many arguments in their household about their different beliefs, which have sometimes put a strain on their relationship. In a particularly heated debate around mask wearing, Cole mentioned a recent headline where someone who’d worn a mask every day at work had nonetheless contracted COVID-19. Kristin said she did not remember that headline, but did note some media stories of indoor outbreaks linked to people not wearing masks. Their tendency to remember headlines that fitted their current beliefs is most linked to which concept? Choose one from the following alternatives: overconfidence bias, optimistic bias, counterfactual thinking, confirmation bias, false consensus bias, reconstructive memory bias, planning fallacy, anchoring and adjustment, availability heuristic.

- As mask wearing became mandatory in indoor public spaces, Cole reluctantly adopted this practice. However, he noticed that the third wave of cases in their local area happened months after this mandate was introduced. He mentioned this to Kristin, saying “How is it that even with all this mask wearing, here we are with the case numbers increasing so fast?” Kristin replied that this wasn’t a fair question, as the number of cases that would have occurred if mask wearing had not been mandatory could have been much higher. Cole responded by saying that there was no way of knowing that for sure. This conversation about how things might have been under different conditions is most relevant to which concept? Choose one from the following alternatives: overconfidence bias, optimistic bias, counterfactual thinking, confirmation bias, false consensus bias, reconstructive memory bias, planning fallacy, anchoring and adjustment, availability heuristic.

- They have also discussed these issues with family and friends, and Cole tends to be more outspoken than Kristin on these issues. Sometimes, though, he has found himself surprised while sharing his views about mask wearing that not many people have agreed with him. Which concept best explains his surprise here? Choose one from the following alternatives: overconfidence bias, optimistic bias, counterfactual thinking, confirmation bias, false consensus bias, reconstructive memory bias, planning fallacy, anchoring and adjustment, availability heuristic.

- Another issue they clashed on was their children’s schooling in the Fall of 2020. In their local area, the government gave parents the option of either sending their children back to school full-time, or of enrolling in remote classes online. Kristin was leaning towards the latter option, as there had been a couple of cases at local schools when schools had reopened in the Summer of 2020. Cole pointed out that the number of cases linked to schools had been low overall, and they eventually decided to send their children back full-time. Although the number of outbreaks at schools remained low, Kristin continued to feel anxious, and assessed the risks as higher than they probably were. Which concept is most relevant here to her difficulty focusing on the low base rates of transmission in schools? Choose one from the following alternatives: overconfidence bias, optimistic bias, counterfactual thinking, confirmation bias, false consensus bias, reconstructive memory bias, planning fallacy, anchoring and adjustment, availability heuristic.

- Cole sometimes got frustrated at Kristin’s anxiety here, and told her that she wasn’t modifying her beliefs enough based on this more recent evidence. To which concept was Kristin’s thinking most closely related? Choose one from the following alternatives: overconfidence bias, optimistic bias, counterfactual thinking, confirmation bias, false consensus bias, reconstructive memory bias, planning fallacy, anchoring and adjustment, availability heuristic.

- Another common topic of conversation during the pandemic was about when it would be over. In the first few months, they were both hopeful that it would be under control some time in 2020. Like many people, they underestimated how long it would take to control, and how many different resources would be needed. Which concept most clearly relates to this underestimation? Choose one from the following alternatives: overconfidence bias, optimistic bias, counterfactual thinking, confirmation bias, false consensus bias, reconstructive memory bias, planning fallacy, anchoring and adjustment, availability heuristic.

- Cole thought that life would return to some normalcy earlier than Kristin did, reflecting his more positive outlook in general about what the future holds. Kristin has maintained that her more cautious view is more realistic, but Cole has countered that Kristin’s view is counterproductive. Which bias is most relevant to their different dispositions here? Choose one from the following alternatives: overconfidence bias, optimistic bias, counterfactual thinking, confirmation bias, false consensus bias, reconstructive memory bias, planning fallacy, anchoring and adjustment, availability heuristic.

- A final contentious issue in their household was that of vaccination. Kristin was very keen to get a vaccination as soon as a vaccine became available, whereas Cole was more skeptical. When the vaccine was rolled out for their age group in their local area, they both got their first shot. Afterwards, Cole was saying that this would allow them to return to a more normal life, and start meeting up with more people. Kristin countered by saying that they would need to wait for their second shots, and probably beyond. They began arguing, with Cole saying that he was tired of Kristin living in fear, and her saying that he was being too reckless. In the end, they agreed to disagree on this point, but these tensions are likely to continue. Which concept best relates to Cole’s reaction to having his first shot here? Choose one from the following alternatives: overconfidence bias, optimistic bias, counterfactual thinking, confirmation bias, false consensus bias, reconstructive memory bias, planning fallacy, anchoring and adjustment, availability heuristic.

Key Takeaways

- We use our schemas and attitudes to help us judge and respond to others. In many cases, this is appropriate, but our expectations can also lead to biases in our judgments of ourselves and others.

- A good part of our social cognition is spontaneous or automatic, operating without much thought or effort. On the other hand, when we have the time and the motivation to think about things carefully, we may engage in thoughtful, controlled cognition.

- Which expectations we use to judge others is based on both the situational salience of the things we are judging and the cognitive accessibility of our own schemas and attitudes.

- Variations in the accessibility of schemas lead to biases such as the availability heuristic, the representativeness heuristic, the false consensus bias, biases caused by counterfactual thinking, and those related to overconfidence.

- The potential biases that result from everyday social cognition can have important consequences, both for us in our everyday lives and for people who make important decisions affecting many others. Although biases are common, they are not impossible to control, and psychologists and other scientists are working to help people make better decisions.

- The operation of cognitive biases, including the potential for new information to distort information already in memory, can help explain the tendency for eyewitnesses to be overconfident and frequently inaccurate in their recollections of what occurred at crime scenes.

Exercises and Critical Thinking

- Give an example of a time when you may have relied on one of the cognitive heuristics or shown one of the biases discussed in this chapter. What factors (e.g., availability; salience) caused the error, and what was the outcome of your use of the shortcut or heuristic? What do you see as the general advantages and disadvantages of relying on this heuristic or bias in your everyday life? Describe one possible strategy you could use to reduce its potentially harmful effects in your life.

- Go to the website The Hot Hand in Sports, which analyzes the extent to which people accurately perceive “streakiness” in sports. Based on the information provided on this site, as well as that in this chapter, in what ways might our sports perceptions be influenced by our expectations and the use of cognitive heuristics and biases?

- Different cognitive heuristics and biases often operate together to influence our social cognition in particular situations. Describe a situation where you feel that two or more biases were affecting your judgment. How did they interact? What combined effects on your social cognition did they have? Which of the heuristics and biases outlined in this chapter do you think might be particularly likely to happen together in social situations and why?

References

Aarts, H., & Dijksterhuis, A. (1999). How often did I do it? Experienced ease of retrieval and frequency estimates of past behavior. Acta Psychologica, 103(1–2), 77–89.

Abbes, M. B. (2012). Does overconfidence explain volatility during the global financial crisis? Transition Studies Review, 19(3), 291-312.

Adams, P. A., & Adams, J. K. (1960). Confidence in the recognition and reproduction of words difficult to spell. American Journal of Psychology, 73, 544–552.

Ariely, D. (2008). Predictably irrational: The hidden forces that shape our decisions. New York: Harper Perennial.

Ariely, D., Loewenstein, G., & Prelec, D. (2003). Coherent arbitrariness: Stable demand curves without stable preferences. Quarterly Journal of Economics, 118(1), 73–106.

Bargh, J. A., Chen, M., & Burrows, L. (1996). Automaticity of social behavior: Direct effects of trait construct and stereotype activation on action. Journal of Personality and Social Psychology, 71(2), 230–244.

Brewer, M. B. (1988). A dual process model of impression formation. In T. K. Srull & R. S. Wyer (Eds.), Advances in social cognition (Vol. 1, pp. 1–36). Hillsdale, NJ: Erlbaum.

Brigham, J. C., Bennett, L. B., Meissner, C. A., & Mitchell, T. L. (Eds.). (2007). The influence of race on eyewitness memory. Mahwah, NJ: Lawrence Erlbaum Associates Publishers.

Brown, D., Scheflin, A. W., & Hammond, D. C. (1998). Memory, trauma treatment, and the law. New York, NY: Norton.

Buehler, R., Griffin, D., & Peetz, J. (2010). The planning fallacy: Cognitive, motivational, and social origins. In M. P. Zanna & J. M. Olson (Eds.), Advances in experimental social psychology (Vol. 43, pp. 1–62). San Diego, CA: Academic Press. doi:10.1016/S0065-2601(10)43001-4

Byrne, R. M. J., & McEleney, A. (2000). Counterfactual thinking about actions and failures to act. Journal of Experimental Psychology: Learning, Memory, and Cognition, 26(5), 1318–1331.

Ceci, S. J., Huffman, M. L. C., Smith, E., & Loftus, E. F. (1994). Repeatedly thinking about a non-event: Source misattributions among preschoolers. Consciousness and Cognition: An International Journal, 3(3–4), 388–407.

Chambers, J. R. (2008). Explaining false uniqueness: Why we are both better and worse than others. Social and Personality Psychology Compass, 2(2), 878–894.

Chang, E. C., Asakawa, K., & Sanna, L. J. (2001). Cultural variations in optimistic and pessimistic bias: Do Easterners really expect the worst and Westerners really expect the best when predicting future life events? Journal of Personality and Social Psychology, 81(3), 476–491. doi:10.1037/0022-3514.81.3.476

Charman, S. D., & Wells, G. L. (2007). Eyewitness lineups: Is the appearance-changes instruction a good idea? Law and Human Behavior, 31(1), 3–22.

Chen, G., Kim, K. A., Nofsinger, J. R., & Rui, O. M. (2007). Trading performance, disposition effect, overconfidence, representativeness bias, and experience of emerging market investors. Journal of Behavioral Decision Making, 20(4), 425-451. doi:10.1002/bdm.561

Cosmides, L., & Tooby, J. (2000). Evolutionary psychology and the emotions. In M. Lewis & J. M. Haviland-Jones (Eds.), Handbook of emotions, 2nd edition (pp. 91-115). New York, NY: The Guilford Press.

Dijksterhuis, A., Bos, M. W., Nordgren, L. F., & van Baaren, R. B. (2006). On making the right choice: The deliberation-without-attention effect. Science, 311(5763), 1005–1007.

Dodson, C. S., Johnson, M. K., & Schooler, J. W. (1997). The verbal overshadowing effect: Why descriptions impair face recognition. Memory & Cognition, 25(2), 129–139.

Doob, A. N., & Macdonald, G. E. (1979). Television viewing and fear of victimization: Is the relationship causal? Journal of Personality and Social Psychology, 37(2), 170–179.

Dunning, D., & Perretta, S. (2002). Automaticity and eyewitness accuracy: A 10- to 12-second rule for distinguishing accurate from inaccurate positive identifications. Journal of Applied Psychology, 87(5), 951–962.

Dunning, D., Griffin, D. W., Milojkovic, J. D., & Ross, L. (1990). The overconfidence effect in social prediction. Journal of Personality and Social Psychology, 58(4), 568–581.

Dunning, D., Johnson, K., Ehrlinger, J., & Kruger, J. (2003). Why people fail to recognize their own incompetence. Current Directions in Psychological Science, 12(3), 83–87.

Egan, D., Merkle, C., & Weber, M. (in press). Second-order beliefs and the individual investor. Journal of Economic Behavior & Organization.

Ehrlinger, J., Gilovich, T. D., & Ross, L. (2005). Peering into the bias blind spot: People’s assessments of bias in themselves and others. Personality and Social Psychology Bulletin, 31, 1–13.

Ferguson, M. J., & Bargh, J. A. (2003). The constructive nature of automatic evaluation. In J. Musch & K. C. Klauer (Eds.), The psychology of evaluation: Affective processes in cognition and emotion (pp. 169–188). Mahwah, NJ: Lawrence Erlbaum Associates Publishers.

Ferguson, M. J., Hassin, R., & Bargh, J. A. (2008). Implicit motivation: Past, present, and future. In J. Y. Shah & W. L. Gardner (Eds.), Handbook of motivation science (pp. 150–166). New York, NY: Guilford Press.

Fisher, R. P. (2011). Editor’s introduction: Special issue on psychology and law. Current Directions in Psychological Science, 20, 4. doi:10.1177/0963721410397654

Forgeard, M. C., & Seligman, M. P. (2012). Seeing the glass half full: A review of the causes and consequences of optimism. Pratiques Psychologiques, 18(2), 107–120. doi:10.1016/j.prps.2012.02.002

Gigerenzer, G. (2004). Fast and frugal heuristics: The tools of bounded rationality. In D. J. Koehler & N. Harvey (Eds.), Blackwell handbook of judgment and decision making (pp. 62–88). Malden, MA: Blackwell Publishing.

Gigerenzer, G. (2006). Out of the frying pan and into the fire: Behavioral reactions to terrorist attacks. Risk Analysis, 26, 347-351.

Gilovich, T., Griffin, D., & Kahneman, D. (Eds.). (2002). Heuristics and biases: The psychology of intuitive judgment. New York, NY: Cambridge University Press.

Haddock, G., Rothman, A. J., Reber, R., & Schwarz, N. (1999). Forming judgments of attitude certainty, intensity, and importance: The role of subjective experiences. Personality and Social Psychology Bulletin, 25, 771–782.

Heine, S. J., Lehman, D. R., Markus, H. R., & Kitayama, S. (1999). Is there a universal need for positive self-regard? Psychological Review, 106(4), 766-794. doi: 10.1037/0033-295X.106.4.766

Hilton, D. J. (2001). The psychology of financial decision-making: Applications to trading, dealing, and investment analysis. Journal of Behavioral Finance, 2, 37–53. doi: 10.1207/S15327760JPFM0201_4

Hirt, E. R., Kardes, F. R., & Markman, K. D. (2004). Activating a mental simulation mind-set through generation of alternatives: Implications for debiasing in related and unrelated domains. Journal of Experimental Social Psychology, 40(3), 374–383.

Hsee, C. K., Hastie, R., & Chen, J. (2008). Hedonomics: Bridging decision research with happiness research. Perspectives On Psychological Science, 3(3), 224-243. doi:10.1111/j.1745-6924.2008.00076.x

Joireman, J., Barnes Truelove, H., & Duell, B. (2010). Effect of outdoor temperature, heat primes and anchoring on belief in global warming. Journal of Environmental Psychology, 30(4), 358–367.

Kahneman, D. (2011). Thinking, fast and slow. New York, NY: Farrar, Straus and Giroux.

Kassam, K. S., Koslov, K., & Mendes, W. B. (2009). Decisions under distress: Stress profiles influence anchoring and adjustment. Psychological Science, 20(11), 1394–1399.

Krueger, J., & Clement, R. W. (1994). The truly false consensus effect: An ineradicable and egocentric bias in social perception. Journal of Personality and Social Psychology, 67(4), 596–610.

Kruger, J., & Dunning, D. (1999). Unskilled and unaware of it: How difficulties in recognizing one’s own incompetence lead to inflated self-assessments. Journal of Personality and Social Psychology, 77(6), 1121–1134.

Kruglanski, A. W., & Freund, T. (1983). The freezing and unfreezing of lay inferences: Effects on impressional primacy, ethnic stereotyping, and numerical anchoring. Journal of Experimental Social Psychology, 19, 448–468.

Lehman, D. R., Lempert, R. O., & Nisbett, R. E. (1988). The effects of graduate training on reasoning: Formal discipline and thinking about everyday-life events. American Psychologist, 43(6), 431–442.

Loewenstein, G. F., Weber, E. U., Hsee, C. K., & Welch, N. (2001). Risk as feelings. Psychological Bulletin, 127(2), 267–286.